Modern systems rarely begin with perfect architecture.

Most real systems evolve from:

- a working prototype,

- an operational script,

- a single service,

- or a growing runtime loop.

The real engineering challenge is not building a perfect greenfield design.

The real challenge is:

evolving a live operational system safely without breaking it.

That process is what I call:

Incremental Decomposition of a Live Runtime System

The Common Trap

Many developers eventually hit this moment:

“This service became too large.”

Then the dangerous ideas start:

- “Let’s rewrite everything.”

- “Let’s implement Clean Architecture.”

- “Let’s rebuild using microservices.”

- “Let’s move to CQRS/Event Sourcing.”

Most rewrite attempts fail here.

Why?

Because:

- operational behavior is already working,

- runtime assumptions already exist,

- hidden coupling has already formed,

- production logic has already evolved organically.

Large rewrites usually introduce:

- instability,

- regressions,

- unclear ownership,

- endless refactor cycles.

A Better Approach

Instead of rewriting:

progressively extract responsibilities.

One boundary at a time.

One stable contract at a time.

One operational behavior at a time.
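A minimal sketch of one such extraction, written in Python for brevity. The names `Order`, `RiskCheck`, `MaxExposureRisk`, and `run_once` are hypothetical, and the exposure rule is an invented stand-in for whatever check currently lives inline. The point is only the shape: the loop depends on a small contract, and the existing rule moves behind it unchanged.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Order:
    symbol: str
    quantity: int
    price: float


class RiskCheck(Protocol):
    """The stable contract: the only thing the loop knows about risk."""

    def allows(self, order: Order, open_exposure: float) -> bool: ...


class MaxExposureRisk:
    """Hypothetical rule that used to live inline, moved without changes."""

    def __init__(self, max_exposure: float) -> None:
        self.max_exposure = max_exposure

    def allows(self, order: Order, open_exposure: float) -> bool:
        return open_exposure + order.quantity * order.price <= self.max_exposure


def run_once(order: Order, open_exposure: float, risk: RiskCheck) -> str:
    # The rest of the loop stays where it was; only the risk decision
    # now goes through the contract.
    if not risk.allows(order, open_exposure):
        return "rejected"
    return "placed"
```

Because `run_once` sees only `RiskCheck`, the rule behind it can later change or be replaced without touching the loop, which is what makes the next extraction cheap.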

Real Example — Trading Runtime Evolution

A trading bot often starts like this:

Program.cs

-> fetch data

-> generate signal

-> validate risk

-> place order

-> update state

-> log everything

At first this is fine.

But eventually:

- stop-loss logic grows,

- portfolio rules grow,

- runtime recovery appears,

- execution tracking appears,

- reconciliation becomes necessary.

Now the single service becomes:

operationally dense.
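For illustration, here is roughly what that single-loop stage can look like, sketched in Python rather than the C# that `Program.cs` implies. Everything in it is made up for the example: the price list, the 50% cash rule, the fixed quantity of 10, and the in-memory `state` dictionary. What matters is that all six responsibilities share one function and one mutable state object.

```python
import logging

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("bot")

# Shared mutable state that every responsibility reads and writes.
state = {"position": 0, "cash": 10_000.0}


def fetch_prices() -> list[float]:
    # Stand-in for a market data call.
    return [100.0, 101.0, 103.0]


def run_once() -> str:
    prices = fetch_prices()                                # fetch data
    signal = "buy" if prices[-1] > prices[0] else "hold"   # generate signal
    if signal != "buy":
        return "no trade"
    cost = prices[-1] * 10
    if cost > state["cash"] * 0.5:                         # validate risk
        log.info("rejected: cost %.2f too large", cost)
        return "rejected"
    state["cash"] -= cost                                  # place order (simulated fill)
    state["position"] += 10                                # update state
    log.info("bought 10 @ %.2f", prices[-1])               # log everything
    return "filled"
```

Adding stop-loss, portfolio rules, or recovery to this function means more branches over the same `state`, which is where the density comes from.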

The Wrong Move

The wrong response is:

“Rewrite the entire platform.”

The correct response is:

“What responsibility can be safely extracted next?”

The Decomposition Pattern

A mature decomposition sequence often looks like:

Step 1 — Separate Signal Generation

Strategy

decides

TradingService

orchestrates

Step 2 — Separate Risk Governance

RiskEngine

validates

TradingService

gathers runtime context

Step 3 — Separate Execution

ExecutionService

places broker orders

Step 4 — Separate Lifecycle Tracking

TradeLifecycleService

records audit trail

Step 5 — Separate Runtime State

PositionStateService

manages runtime transitions

Step 6 — Separate Recovery

RecoveryService

reconciles broker/runtime state

Step 7 — Separate Runtime Coordination

TradingRuntimeService

owns orchestration loop

The Key Insight

Notice something important:

No rewrite occurred.

The runtime stayed operational the entire time.

That is critical.

Because architecture should evolve:

under operational pressure.

Not in isolation.
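To make the first four steps of the decomposition sequence concrete, here is a small Python sketch of where they might lead. The class names follow the article; every method name, the `Signal` type, the toy trading rule, and the in-memory broker and audit trail are assumptions made for the example. Each class could be introduced in its own release while `run_once` keeps working throughout.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Signal:
    symbol: str
    side: str        # only "buy" is used in this sketch
    quantity: int


class Strategy:
    """Step 1: decides, and nothing else."""

    def decide(self, prices: list[float]) -> Optional[Signal]:
        if len(prices) >= 2 and prices[-1] > prices[0]:
            return Signal("ABC", "buy", 10)
        return None


class RiskEngine:
    """Step 2: validates against context the caller gathered."""

    def __init__(self, max_position: int) -> None:
        self.max_position = max_position

    def approve(self, signal: Signal, current_position: int) -> bool:
        return current_position + signal.quantity <= self.max_position


@dataclass
class ExecutionService:
    """Step 3: the only place that talks to the broker (faked here)."""

    sent: list[Signal] = field(default_factory=list)

    def place(self, signal: Signal) -> str:
        self.sent.append(signal)
        return f"order-{len(self.sent)}"


@dataclass
class TradeLifecycleService:
    """Step 4: append-only audit trail."""

    events: list[str] = field(default_factory=list)

    def record(self, event: str) -> None:
        self.events.append(event)


class TradingService:
    """Orchestrates the others; holds no trading rules of its own."""

    def __init__(self, strategy, risk, execution, lifecycle) -> None:
        self.strategy, self.risk = strategy, risk
        self.execution, self.lifecycle = execution, lifecycle
        self.position = 0  # runtime context handed to the risk engine

    def run_once(self, prices: list[float]) -> Optional[str]:
        signal = self.strategy.decide(prices)
        if signal is None:
            return None
        if not self.risk.approve(signal, self.position):
            self.lifecycle.record(f"rejected {signal.symbol}")
            return None
        order_id = self.execution.place(signal)
        self.position += signal.quantity
        self.lifecycle.record(f"placed {order_id}")
        return order_id
```

In this sketch `TradingService` still tracks the position itself. Steps 5 to 7 would move that state, the broker reconciliation, and the loop into their own services in the same one-at-a-time fashion.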

Why Incremental Decomposition Works

This approach provides:

1. Operational Stability

The system continues running while architecture improves.

2. Smaller Blast Radius

Each extraction changes only one responsibility.

Failures become easier to isolate.
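One way to keep an extraction's blast radius small, offered here as a suggestion rather than something the article prescribes, is to run the old inline rule and the newly extracted service side by side for a while and record any disagreement. The Python sketch below assumes a hypothetical `legacy_risk_ok` function, a `RiskEngine` with an `approve` method, and a 50% cash rule; none of these come from a real codebase.

```python
def legacy_risk_ok(cost: float, cash: float) -> bool:
    # The rule as it existed inline before extraction (invented for the example).
    return cost <= cash * 0.5


class RiskEngine:
    """The extracted service that is meant to behave identically."""

    def __init__(self, max_fraction: float) -> None:
        self.max_fraction = max_fraction

    def approve(self, cost: float, cash: float) -> bool:
        return cost <= cash * self.max_fraction


def shadow_check(cost: float, cash: float, engine: RiskEngine,
                 mismatches: list) -> bool:
    old = legacy_risk_ok(cost, cash)
    new = engine.approve(cost, cash)
    if old != new:
        # Record the disagreement for later inspection instead of acting on it.
        mismatches.append((cost, cash, old, new))
    # The legacy answer stays authoritative until parity has been observed.
    return old
```

If the `mismatches` list stays empty over a representative period, the inline rule can be deleted. If it does not, the failure is confined to one responsibility and the recorded inputs show where the two versions diverge.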

3. Better Runtime Understanding

You discover real system boundaries from:

- runtime behavior,

- operational pain,

- scaling pressure,

- recovery needs.

Not from theoretical diagrams.

4. Cleaner Ownership

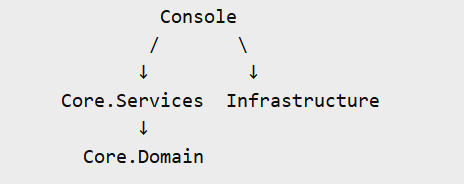

Eventually the system becomes:

Runtime Coordinator

orchestrates

Governance Services

validate

Workflow Services

coordinate

Execution Services

execute

Recovery Services

reconcile

At that point:

- reasoning improves,

- testing improves,

- extensibility improves,

- future capabilities emerge naturally.
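A short Python sketch of why testing improves once the roles are separate. `RuntimeCoordinator`, its `tick` method, and the three callables are hypothetical names. The choice to pause when reconciliation reports drift is an assumption made for this example, and the workflow role is left out to keep it short.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RuntimeCoordinator:
    validate: Callable[[dict], bool]      # governance role
    execute: Callable[[dict], str]        # execution role
    reconcile: Callable[[], list[str]]    # recovery role

    def tick(self, intent: dict) -> str:
        # Assumed policy: do nothing new while broker and runtime disagree.
        drift = self.reconcile()
        if drift:
            return "paused: " + ", ".join(drift)
        if not self.validate(intent):
            return "rejected"
        return self.execute(intent)


# Each role can be replaced by a one-line stub in a test.
coordinator = RuntimeCoordinator(
    validate=lambda intent: intent["qty"] <= 100,
    execute=lambda intent: "sent " + intent["symbol"],
    reconcile=lambda: [],
)
```

With this shape, the coordinator's ordering logic can be tested without a broker, a strategy, or a database, and each real service can be tested without the coordinator.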

The Most Important Engineering Skill

Most developers learn:

- frameworks,

- patterns,

- syntax.

Far fewer learn:

controlled evolution of operational systems.

That skill matters more in real engineering environments.

Because most enterprise systems are not rewritten.

They evolve.

When To Stop Refactoring

This is equally important.

Eventually you reach:

diminishing returns.

At that point:

- stop extracting services,

- stop renaming abstractions,

- stop chasing “perfect architecture.”

Instead:

- run the system,

- observe failures,

- validate recovery,

- analyze logs,

- study runtime behavior.

Operational pressure should guide the next evolution.
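As one example of what "validate recovery" can mean in practice, the Python sketch below diffs the positions a broker reports against the positions the runtime believes it holds. The function name `reconcile` and the dictionary shapes are assumptions; a real broker API would need its own adapter to produce the `broker` mapping.

```python
def reconcile(broker: dict[str, int],
              runtime: dict[str, int]) -> dict[str, tuple[int, int]]:
    """Return {symbol: (broker_qty, runtime_qty)} for every disagreement."""
    drift = {}
    # Check every symbol known to either side; a missing entry counts as zero.
    for symbol in sorted(broker.keys() | runtime.keys()):
        broker_qty = broker.get(symbol, 0)
        runtime_qty = runtime.get(symbol, 0)
        if broker_qty != runtime_qty:
            drift[symbol] = (broker_qty, runtime_qty)
    return drift
```

Running a check like this after each restart, and logging the result, gives concrete evidence about whether recovery works. That evidence is more useful for deciding the next extraction than another round of renaming.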

Final Thought

Good architecture is not:

- maximum abstraction,

- maximum patterns,

- or maximum complexity.

Good architecture is:

clear responsibility boundaries that evolved safely under real operational conditions.

That is how live runtime systems mature professionally.