Here is the recipe:

Month: January 2022

Setting up Traefik on unRAID

This is a basic Traefik setup. Follow these steps to set up Traefik as a reverse proxy on unRAID.

We will be using Traefik 2.x as a reverse proxy on unRAID v6.9.x. We will set up routes to the unRAID UI and the Traefik dashboard to show that traffic can be routed to any container running on unRAID.

DNS records configuration

We need to create DNS records that all point to the unRAID box. We will be using the unRAID default “local” domain, with the server running on 192.168.1.20. Since we own the foo.com domain, our DNS records would be:

tower.local.foo.com -> 192.168.1.20

traefik-dashboard.local.foo.com -> 192.168.1.20

How and where to configure these depends on your DNS server (for example, Pi-hole).
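As a rough illustration, the two records can be written in hosts-file format ("IP hostname"), which many local DNS servers accept. The helper name `make_local_dns_records` is hypothetical, and where the output should go depends entirely on your DNS server.

```shell
# Print one hosts-file style record ("IP hostname") per hostname.
# Usage: make_local_dns_records <ip> <hostname>...
make_local_dns_records() {
  local ip="$1" host
  shift
  for host in "$@"; do
    printf '%s %s\n' "$ip" "$host"
  done
}

# Records used in this guide
make_local_dns_records 192.168.1.20 \
  tower.local.foo.com \
  traefik-dashboard.local.foo.com
```

Once the records are in place, `nslookup tower.local.foo.com` from a client should return 192.168.1.20.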

Reconfiguring unRAID HTTP Port

The unRAID web UI uses port 80 by default, but Traefik needs to listen on port 80, so we need to move the web UI to a different port.

Go to Settings -> Management Access and change the HTTP port from 80 to 8080.

If the Traefik container is not working, we can still access the unRAID server directly at http://192.168.1.20:8080.

Traefik configuration

In order to configure Traefik we will use a mix of dynamic configuration (via Docker labels and a dynamic configuration file) and static configuration (via traefik.yml).

Place the following YAML configuration files in your appdata share.

appdata/traefik/traefik.yml

api:
  dashboard: true
  insecure: true

entryPoints:
  http:
    address: ":80"

providers:
  docker: {}
  file:
    filename: /etc/traefik/dynamic_conf.yml
    watch: true

appdata/traefik/dynamic_conf.yml

http:
  routers:
    unraid:
      entryPoints:
        - http
      service: unraid
      rule: "Host(`tower.local.foo.com`)"
  services:
    unraid:
      loadBalancer:
        servers:
          - url: "http://192.168.1.20:8080/"

Make sure the YAML files use two-space indentation.
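A quick way to catch indentation slips before starting the container is a small check like the sketch below. It is only a heuristic, not a YAML parser: it flags tabs and odd numbers of leading spaces. The function name `check_yaml_indent` is made up for this example.

```shell
# Return 0 if every non-blank line is indented with spaces only, in
# multiples of two. Otherwise print the offending lines and return 1.
check_yaml_indent() {
  local file="$1" line indent tab lineno=0 status=0
  tab="$(printf '\t')"
  while IFS= read -r line || [ -n "$line" ]; do
    lineno=$((lineno + 1))
    # Leading whitespace: strip everything from the first non-space character
    indent="${line%%[![:space:]]*}"
    # Skip empty and whitespace-only lines
    if [ "$indent" = "$line" ]; then
      continue
    fi
    case "$indent" in
      *"$tab"*)
        echo "line $lineno: tab used for indentation"
        status=1
        continue
        ;;
    esac
    if [ $(( ${#indent} % 2 )) -ne 0 ]; then
      echo "line $lineno: ${#indent} leading spaces is not a multiple of two"
      status=1
    fi
  done < "$file"
  return "$status"
}
```

For example: `check_yaml_indent /mnt/user/appdata/traefik/traefik.yml && echo "indentation looks fine"`.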

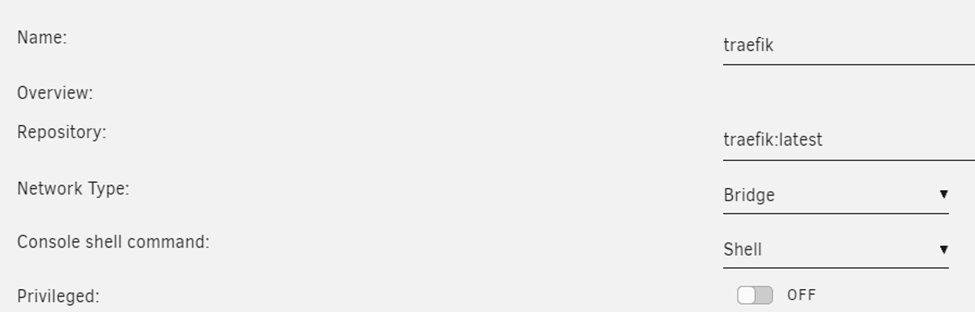

Setup Traefik Container

Go to the Docker tab in unRAID and click ADD CONTAINER.

We need to fill in the following configuration:

Name: traefik

Repository: traefik:latest

Network Type: bridge

Add a port mapping from 80 → 80, so that Traefik can listen for incoming HTTP traffic.

Add a path where we mount our /mnt/user/appdata/traefik to /etc/traefik so that Traefik can actually read our configuration.

Add another path where we mount our Docker socket /var/run/docker.sock to /var/run/docker.sock. Read-only is sufficient here.

This is required so Traefik can listen for new containers and read their labels, which drive the dynamic configuration. We are using this exact mechanism to expose the Traefik dashboard now.

Add a label

• key = traefik.http.routers.api.entrypoints

• value = http

Add another label

• key = traefik.http.routers.api.service

• value = api@internal

And a final label

• key = traefik.http.routers.api.rule

• value = Host(`traefik-dashboard.local.foo.com`)

Our container configuration should look like this:

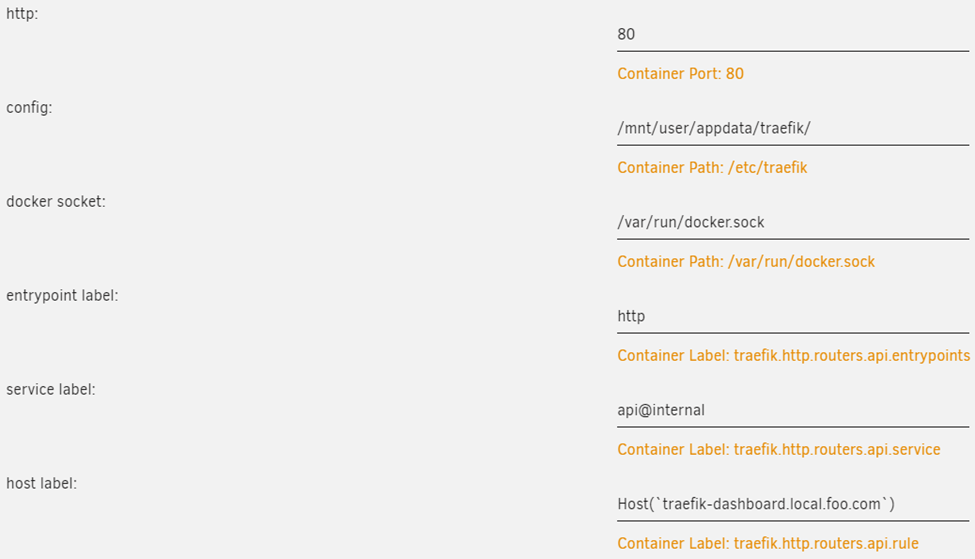

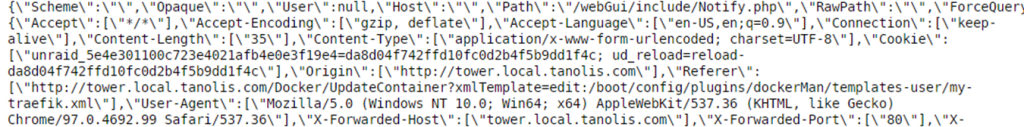

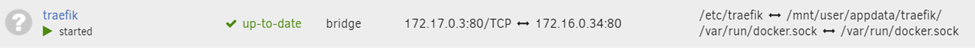
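For readers who prefer the command line, the same settings map roughly onto a `docker run` call. This is a sketch that mirrors the template fields above, not something the unRAID UI generates verbatim. The wrapper `run_traefik` is a hypothetical helper that takes the launcher as its arguments, so it can be previewed with `echo` first. Note the single quotes, which keep the shell from interpreting the backticks in the Host() rule.

```shell
# Launch Traefik with the settings from the template above.
# "$@" is the launcher: pass "docker" to run it, or "echo docker" to preview.
run_traefik() {
  "$@" run -d \
    --name traefik \
    --network bridge \
    -p 80:80 \
    -v /mnt/user/appdata/traefik:/etc/traefik \
    -v /var/run/docker.sock:/var/run/docker.sock:ro \
    --label 'traefik.http.routers.api.entrypoints=http' \
    --label 'traefik.http.routers.api.service=api@internal' \
    --label 'traefik.http.routers.api.rule=Host(`traefik-dashboard.local.foo.com`)' \
    traefik:latest
}

# Preview only. The printed line loses its quoting, so do not paste it back.
run_traefik echo docker
```

To actually start the container, call `run_traefik docker`.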

Run the container and view the container log to make sure it's running. The log will scroll as new entries arrive, which shows that Traefik is up and running.

Open a browser. We should now be able to access unRAID at http://tower.local.foo.com and the Traefik dashboard at http://traefik-dashboard.local.foo.com.

Proxying any Container

In order to add another container to our Traefik configuration we simply need to add a single label to it.

Assuming we have a Portainer container running, we can add a label with

• key = traefik.http.routers.portainer.rule

• value = Host(`portainer.local.foo.com`)

If our container is only exposing a single port, Traefik is smart enough to pick it up, and no other configuration is required.

If the Portainer container exposed multiple ports, with the web UI accessible on port 3900, we would need to add an additional label whose value is that port:

• key = traefik.http.services.portainer.loadbalancer.server.port

• value = 3900
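Since every proxied container follows the same label pattern, a small generator can help avoid typos. This is a sketch: `traefik_labels` is a hypothetical helper, and the port 3900 is the web UI port taken from the text above.

```shell
# Print the Traefik labels for one container as key=value lines.
# Usage: traefik_labels <router-name> <hostname> [web-ui-port]
traefik_labels() {
  local name="$1" host="$2" port="${3:-}"
  printf 'traefik.http.routers.%s.rule=Host(`%s`)\n' "$name" "$host"
  # The port label is only needed when the container exposes several ports
  if [ -n "$port" ]; then
    printf 'traefik.http.services.%s.loadbalancer.server.port=%s\n' "$name" "$port"
  fi
}

traefik_labels portainer portainer.local.foo.com 3900
```

Each printed line splits at the first `=` into the key and value to enter in the unRAID container template.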

For hosts outside unRAID to take advantage of Traefik, point their DNS entries to the Traefik host. In that case we have to define the router and service in the Traefik dynamic configuration file, as we did for the unRAID UI.

Resources

SQL Server basic commands

This is a handy list of basic commands:

Append a new column to a table

ALTER TABLE table_name
ADD column_name data_type column_constraint;

Append multiple columns to a table

ALTER TABLE table_name
ADD
    column_name_1 data_type_1 column_constraint_1,
    column_name_2 data_type_2 column_constraint_2,
    ...,
    column_name_n data_type_n column_constraint_n;

Create a new table

CREATE TABLE table_name (
    column_name_1 data_type_1 IDENTITY PRIMARY KEY,
    column_name_2 data_type_2 NOT NULL,
    column_name_3 data_type_3 NULL
);

Move Pi-hole databases and lists to a different location

Create a new folder in the new location, for example pihole-db under /srv.

mkdir pihole-db

# make sure folder has this permission

chmod 775 pihole-db

# change user/group to pihole on this folder

chown pihole:pihole pihole-db

We will copy each database to pihole-db and then replace the original in /etc/pihole with a symlink (symbolic link) that points to the copy.

https://unix.stackexchange.com/questions/218557/how-to-change-ownership-of-symbolic-links

# Pihole-FTL.db

# stop Pihole service

sudo service pihole-FTL stop

cp /etc/pihole/pihole-FTL.db /srv/pihole-db

chown pihole:pihole /srv/pihole-db/pihole-FTL.db

# remove the original once the copy is in place, otherwise ln fails

rm /etc/pihole/pihole-FTL.db

# create link in /etc/pihole

ln -s /srv/pihole-db/pihole-FTL.db /etc/pihole/pihole-FTL.db

# change owner/group of symlinks

sudo chown -h pihole:pihole /etc/pihole/pihole-FTL.db

# start the service

sudo service pihole-FTL start

# check service status

# systemctl status pihole-FTL

Open a browser, navigate to a site and see if pihole-FTL works.

Pihole-FTL is working again. Let’s move the others:

# gravity.db

sudo service pihole-FTL stop

cp /etc/pihole/gravity.db /srv/pihole-db

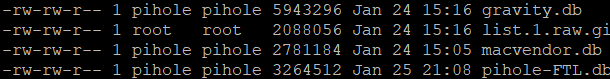

ls -l /srv/pihole-db

chown pihole:pihole /srv/pihole-db/gravity.db

rm /etc/pihole/gravity.db

# create symlink in /etc/pihole

ln -s /srv/pihole-db/gravity.db /etc/pihole/gravity.db

# change owner/group of symlinks

sudo chown -h pihole:pihole /etc/pihole/gravity.db

# verify

sudo service pihole-FTL start

# macvendor.db

sudo service pihole-FTL stop

cp /etc/pihole/macvendor.db /srv/pihole-db

ls -l /srv/pihole-db

chown pihole:pihole /srv/pihole-db/macvendor.db

rm /etc/pihole/macvendor.db

# create symlink in /etc/pihole

ln -s /srv/pihole-db/macvendor.db /etc/pihole/macvendor.db

sudo chown -h pihole:pihole /etc/pihole/macvendor.db

# verify

sudo service pihole-FTL start

# list.1.raw.githubusercontent.com.domains

sudo service pihole-FTL stop

cp /etc/pihole/list.1.raw.githubusercontent.com.domains /srv/pihole-db

ls -l /srv/pihole-db

rm /etc/pihole/list.1.raw.githubusercontent.com.domains

# create symlink in /etc/pihole

ln -s /srv/pihole-db/list.1.raw.githubusercontent.com.domains /etc/pihole/list.1.raw.githubusercontent.com.domains

# verify

sudo service pihole-FTL start

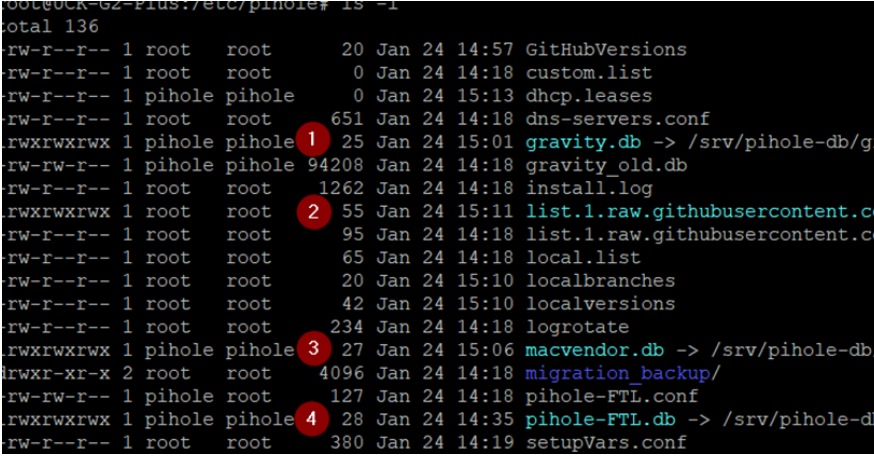

Make sure you have changed the owner and group of the symbolic links to these databases.

sudo chown -h pihole:pihole /etc/pihole/pihole-FTL.db

sudo chown -h pihole:pihole /etc/pihole/macvendor.db

sudo chown -h pihole:pihole /etc/pihole/gravity.db
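The copy, remove, link and chown steps are the same for every file, so they can be wrapped in one function. This is a minimal sketch under the assumptions used in this post (/etc/pihole as the source, /srv/pihole-db as the destination). The name `relocate_with_symlink` is made up here, and the function does not stop or start pihole-FTL for you.

```shell
# Move one file from src_dir to dest_dir and leave a symlink behind.
# Usage: relocate_with_symlink <file-name> <src-dir> <dest-dir> [owner:group]
relocate_with_symlink() {
  local name="$1" src_dir="$2" dest_dir="$3" owner="${4:-}"
  # Copy first; the original is removed only if the copy succeeded
  cp -p "$src_dir/$name" "$dest_dir/$name" || return 1
  rm "$src_dir/$name" || return 1
  ln -s "$dest_dir/$name" "$src_dir/$name" || return 1
  if [ -n "$owner" ]; then
    # Change the copy, then the link itself (-h acts on the symlink)
    chown "$owner" "$dest_dir/$name" || return 1
    chown -h "$owner" "$src_dir/$name" || return 1
  fi
}

# Example (run as root, with the service stopped):
#   service pihole-FTL stop
#   for f in pihole-FTL.db gravity.db macvendor.db; do
#     relocate_with_symlink "$f" /etc/pihole /srv/pihole-db pihole:pihole
#   done
#   service pihole-FTL start
```

Afterwards, `ls -l /etc/pihole` should show each moved file as a link pointing into /srv/pihole-db.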

Make sure you can see these permissions:

To reset, run this command:

chmod 664 gravity.db

Here is your modified file system:

To rebuild the gravity database, run this and check the timestamp:

pihole -g

https://discourse.pi-hole.net/t/gravity-database/46182

For the macvendor database, refer to this:

Resources

https://www.cyberciti.biz/faq/linux-log-files-location-and-how-do-i-view-logs-files/

Setting up Pi-hole as a recursive DNS server solution

Install unbound:

sudo apt install unbound

Run this command to create the configuration file:

nano /etc/unbound/unbound.conf.d/pi-hole.conf

Copy and paste this from the Pi-hole site:

server:

# If no logfile is specified, syslog is used

# logfile: "/var/log/unbound/unbound.log"

verbosity: 0

interface: 127.0.0.1

port: 5335

do-ip4: yes

do-udp: yes

do-tcp: yes

# May be set to yes if you have IPv6 connectivity

do-ip6: no

# You want to leave this to no unless you have *native* IPv6. With 6to4 and

# Terredo tunnels your web browser should favor IPv4 for the same reasons

prefer-ip6: no

# Use this only when you downloaded the list of primary root servers!

# If you use the default dns-root-data package, unbound will find it automatically

#root-hints: "/var/lib/unbound/root.hints"

# Trust glue only if it is within the server's authority

harden-glue: yes

# Require DNSSEC data for trust-anchored zones, if such data is absent, the zone becomes BOGUS

harden-dnssec-stripped: yes

# Don't use Capitalization randomization as it known to cause DNSSEC issues sometimes

# see https://discourse.pi-hole.net/t/unbound-stubby-or-dnscrypt-proxy/9378 for further details

use-caps-for-id: no

# Reduce EDNS reassembly buffer size.

# IP fragmentation is unreliable on the Internet today, and can cause

# transmission failures when large DNS messages are sent via UDP. Even

# when fragmentation does work, it may not be secure; it is theoretically

# possible to spoof parts of a fragmented DNS message, without easy

# detection at the receiving end. Recently, there was an excellent study

# >>> Defragmenting DNS - Determining the optimal maximum UDP response size for DNS <<<

# by Axel Koolhaas, and Tjeerd Slokker (https://indico.dns-oarc.net/event/36/contributions/776/)

# in collaboration with NLnet Labs explored DNS using real world data from the

# the RIPE Atlas probes and the researchers suggested different values for

# IPv4 and IPv6 and in different scenarios. They advise that servers should

# be configured to limit DNS messages sent over UDP to a size that will not

# trigger fragmentation on typical network links. DNS servers can switch

# from UDP to TCP when a DNS response is too big to fit in this limited

# buffer size. This value has also been suggested in DNS Flag Day 2020.

edns-buffer-size: 1232

# Perform prefetching of close to expired message cache entries

# This only applies to domains that have been frequently queried

prefetch: yes

# One thread should be sufficient, can be increased on beefy machines. In reality for most users running on small networks or on a single machine, it should be unnecessary to seek performance enhancement by increasing num-threads above 1.

num-threads: 1

# Ensure kernel buffer is large enough to not lose messages in traffic spikes

so-rcvbuf: 1m

# Ensure privacy of local IP ranges

private-address: 192.168.0.0/16

private-address: 169.254.0.0/16

private-address: 172.16.0.0/12

private-address: 10.0.0.0/8

private-address: fd00::/8

private-address: fe80::/10

Save the file and exit nano with CTRL+X (confirm with Y when prompted).

Start the local recursive server and test that it’s operational:

sudo service unbound restart

dig pi-hole.net @127.0.0.1 -p 5335

The first query may be quite slow, but subsequent queries, also to other domains under the same TLD, should be fairly quick.

You should also consider adding

edns-packet-max=1232

to a config file like /etc/dnsmasq.d/99-edns.conf to signal FTL to adhere to this limit.
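One possible way to create that file from the shell is sketched below. The function name `write_edns_limit` is hypothetical. The default path and value are the ones named above, and writing under /etc needs root.

```shell
# Write the EDNS packet size limit to a dnsmasq config file.
# Usage: write_edns_limit [config-file] [size]
write_edns_limit() {
  local conf="${1:-/etc/dnsmasq.d/99-edns.conf}" size="${2:-1232}"
  printf 'edns-packet-max=%s\n' "$size" > "$conf"
}

# Example (as root): write_edns_limit
# Then restart FTL so it picks up the file: sudo service pihole-FTL restart
```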

Test validation

You can test DNSSEC validation using

dig sigfail.verteiltesysteme.net @127.0.0.1 -p 5335

dig sigok.verteiltesysteme.net @127.0.0.1 -p 5335

The first command should give a status report of SERVFAIL and no IP address. The second should give NOERROR plus an IP address.
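The two checks can be combined into one pass/fail test by reading the status field from the header of each dig reply. This is a sketch with hypothetical helper names (`dig_status`, `check_dnssec`), and it assumes dig's usual `status: NAME,` header format.

```shell
# Extract the status field (e.g. NOERROR, SERVFAIL) from dig output on stdin.
dig_status() {
  sed -n 's/.*status: \([A-Z]*\),.*/\1/p' | head -n 1
}

# Query both test domains and compare against the expected statuses.
# Usage: check_dnssec [server] [port]
check_dnssec() {
  local server="${1:-127.0.0.1}" port="${2:-5335}" bad good
  bad="$(dig sigfail.verteiltesysteme.net "@$server" -p "$port" | dig_status)"
  good="$(dig sigok.verteiltesysteme.net "@$server" -p "$port" | dig_status)"
  if [ "$bad" = "SERVFAIL" ] && [ "$good" = "NOERROR" ]; then
    echo "DNSSEC validation works"
  else
    echo "unexpected result: sigfail=$bad sigok=$good"
    return 1
  fi
}
```

Run `check_dnssec` on the Pi-hole host after unbound has been restarted.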

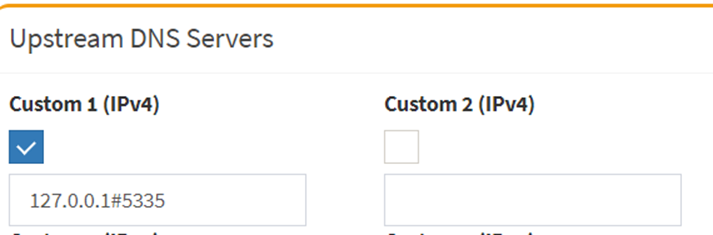

Configure Pi-hole

Finally, configure Pi-hole to use your recursive DNS server by specifying 127.0.0.1#5335 as the Custom DNS (IPv4):

Make sure this is the only upstream DNS server you have configured. For further info, refer to this: