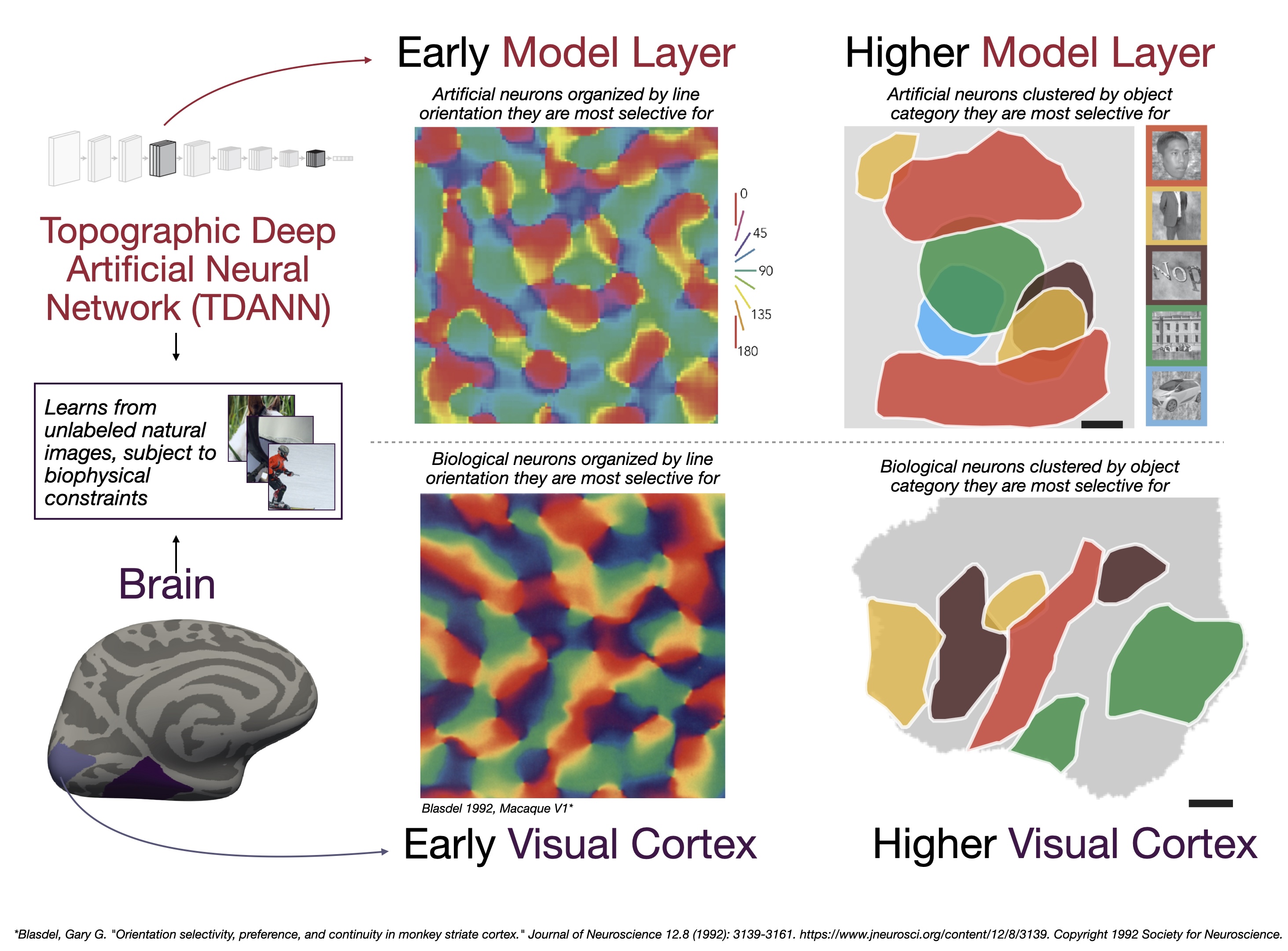

Meta has taken a bold step into the future of neuroscience with the release of TRIBE v2, an open-source AI model that can simulate human brain activity across vision, hearing, and language. What makes this release remarkable isn’t just its scale but its performance: in some cases, its synthetic predictions track population-level brain activity more closely than an individual fMRI scan does.

This signals a potential turning point where software begins to rival, and for some experiments stand in for, traditional brain imaging.

🚀 What TRIBE v2 Actually Does

TRIBE v2 is designed to model how the brain responds to different stimuli—like images, sounds, and text—without needing a human subject inside an MRI machine.

Here’s what sets it apart:

- Massive scale-up in data and scope

  - Trained on 1,000+ hours of brain recordings

  - Expanded from 1,000 → 70,000 brain regions

  - Built using data from 700+ individuals (vs. just 4 in v1)

- Cross-modal intelligence

  - Simulates neural responses across:

    - 👁️ Vision

    - 👂 Hearing

    - 🗣️ Language

- High-fidelity predictions

  - Its outputs align with population-level brain activity

  - In some cases, cleaner than real fMRI scans, which are often noisy due to:

    - Heartbeats

    - Movement

    - Scanner artifacts

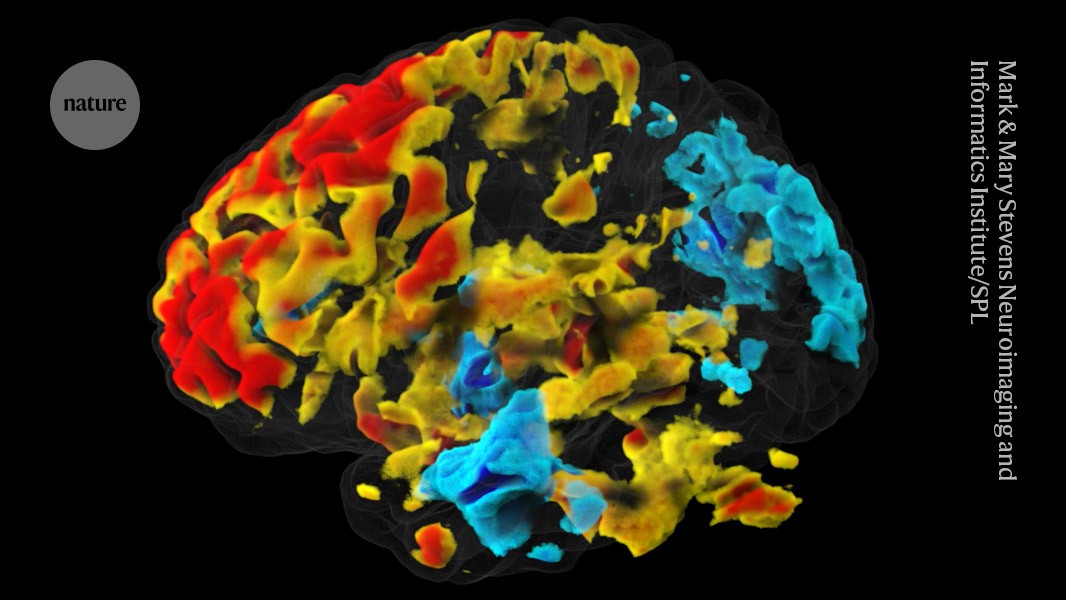

🧪 A Surprising Result: AI vs. Real Brain Scans

One of the most striking findings is that TRIBE v2’s predictions can match population-level brain activity patterns better than actual fMRI recordings do.

That sounds counterintuitive—until you consider:

- fMRI scans are inherently noisy and indirect

- AI models can produce clean, idealized signals

- Aggregated training across hundreds of people averages out individual variability

In effect, TRIBE v2 creates a “denoised, generalized brain”—something neuroscientists have never had access to before.
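The averaging argument above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not TRIBE v2's evaluation code: the sine-wave "signal", the noise levels, and the function names `pearson` and `simulate` are all made up for the demo, and the "prediction" is simply the shared signal plus a small error. It only shows why a low-noise prediction can correlate better with a group average than a single noisy scan does.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def simulate(n_subjects=50, n_timepoints=300, noise_sd=2.0,
             model_error_sd=0.5, seed=0):
    """Compare a noisy single scan and a clean stand-in prediction
    against the group-average response (all values are synthetic)."""
    rng = random.Random(seed)

    # Hypothetical shared response to a stimulus (unknown in a real study).
    signal = [math.sin(2 * math.pi * t / 40) for t in range(n_timepoints)]

    # Each simulated scan = shared signal + independent per-subject noise.
    scans = [[s + rng.gauss(0, noise_sd) for s in signal]
             for _ in range(n_subjects)]

    # Hold out subject 0; the group average is built from everyone else.
    held_out, rest = scans[0], scans[1:]
    group_mean = [sum(col) / len(rest) for col in zip(*rest)]

    # Stand-in for a model prediction: the signal plus a small error.
    prediction = [s + rng.gauss(0, model_error_sd) for s in signal]

    return {
        "single_scan_vs_group": pearson(held_out, group_mean),
        "prediction_vs_group": pearson(prediction, group_mean),
    }


if __name__ == "__main__":
    for name, r in simulate().items():
        print(f"{name}: {r:.2f}")
```

With these settings the stand-in prediction correlates more strongly with the group average than the held-out scan does, because the scan carries far more noise. Raising `model_error_sd` or lowering `noise_sd` narrows the gap, which is a reminder that the result depends on how noisy the real recordings are.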

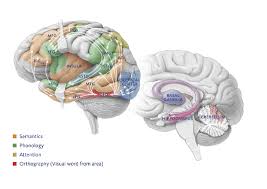

🧠 Reproducing Decades of Neuroscience—Without Scans

Perhaps the most impressive capability: TRIBE v2 can rediscover known brain mappings purely in software.

Without running new scans, it correctly identified:

- Face-processing regions

- Speech-related areas

- Text and language centers

This suggests the model has internalized fundamental principles of brain organization, a milestone for computational neuroscience.
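A software-only "localizer" of this kind can be sketched as a contrast between predicted responses to one stimulus category and predicted responses to the others. The code below is a toy illustration, not the TRIBE v2 API: `toy_predict` is a hypothetical stand-in for an encoding model, the region labels are invented, and the assumption that `region_b` prefers faces is hard-coded so the contrast has something to find.

```python
import random
from statistics import mean, stdev

# Hypothetical region labels, used only for this demo.
REGIONS = ["region_a", "region_b", "region_c", "region_d"]


def toy_predict(category, rng):
    """Stand-in for an encoding model: one predicted response per region."""
    response = {region: rng.gauss(0.0, 0.3) for region in REGIONS}
    # Demo assumption: region_b responds more strongly to faces.
    if category == "face":
        response["region_b"] += 1.5
    return response


def localize(target, categories, n_stimuli=40, threshold=5.0, seed=0):
    """Return regions whose predicted response to `target` stimuli
    exceeds their response to all other categories (Welch t statistic)."""
    rng = random.Random(seed)
    responses = {
        c: [toy_predict(c, rng) for _ in range(n_stimuli)] for c in categories
    }

    selective = []
    for region in REGIONS:
        on = [r[region] for r in responses[target]]
        off = [r[region]
               for c in categories if c != target
               for r in responses[c]]
        # Standard error of the difference between the two means.
        se = (stdev(on) ** 2 / len(on) + stdev(off) ** 2 / len(off)) ** 0.5
        t_stat = (mean(on) - mean(off)) / se
        if t_stat > threshold:
            selective.append(region)
    return selective


if __name__ == "__main__":
    print(localize("face", ["face", "place", "word"]))
```

In a real workflow the toy predictor would be replaced by the released model's predictions for actual images, audio, or text, and the resulting map would still need to be checked against known anatomy and measured data.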

🔓 Fully Open-Source (and That’s a Big Deal)

Meta didn’t just publish a paper—they released:

- ✅ Model weights

- ✅ Source code

- ✅ Live demo environment

This dramatically lowers the barrier to entry. Researchers no longer need:

- Access to expensive MRI machines

- Complex experimental setups

- Large subject pools

Instead, they can run virtual brain experiments on demand.

⚡ Why This Matters (The AlphaFold Moment?)

This could be neuroscience’s version of AlphaFold.

Before AlphaFold:

- Determining a single protein structure could take months or years of lab work

After AlphaFold:

- Structures can be predicted in minutes

TRIBE v2 could trigger a similar shift:

| Traditional Neuroscience | With TRIBE v2 |
|---|---|
| Expensive MRI scans | Virtual simulations |
| Weeks/months per study | Seconds/minutes |
| Limited sample sizes | Scalable datasets |
| High noise levels | Clean predictions |

⚠️ Important Caveats

Despite the excitement, this isn’t a full replacement for experimental neuroscience (yet):

- It models average brain behavior, not individual differences

- It depends heavily on training data quality

- Real-world validation is still essential

Think of it as a powerful accelerator, not a total substitute.

🧭 The Bigger Picture

TRIBE v2 hints at a future where:

- Brain research becomes compute-driven instead of hardware-limited

- Hypotheses can be tested before involving human subjects

- AI helps uncover patterns we might never detect manually

For anyone working on cloud platforms such as Azure and in AI systems design, this is also a signal:

👉 The next wave of AI isn’t just language or vision—it’s biological system simulation at scale.

💡 Bottom Line

TRIBE v2 is more than a model—it’s a shift in how we approach understanding the brain.

If it continues to evolve, we may soon reach a point where running a neuroscience experiment feels more like running a cloud workload.

And that’s a profound change.